Today, many priorities for improving teaching and learning are unmet. Educators seek technology-enhanced approaches to these priorities that are safe, effective, and scalable. Like all of us, educators use AI-powered services in their everyday lives, such as voice assistants in their homes; tools that can correct grammar, complete sentences, and write essays; and automated trip planning on their phones. As a result, educators see opportunities to use AI-powered capabilities like speech recognition to increase the support available to students with disabilities, multilingual learners, and others who could benefit from greater adaptivity and personalization in digital tools for learning. They are also exploring how AI can help them write or improve lessons, and how it can support their process for finding, choosing, and adapting material for use in those lessons.

Educators are also aware of new risks. Useful, powerful functionality can be accompanied by new data privacy and security risks. Educators recognize that AI can automatically produce output that is inappropriate or wrong. They are wary that the associations or automations created by AI may amplify unwanted biases. They have noted new ways in which students may represent others’ work as their own. They are well aware of “teachable moments” and pedagogical strategies that a human teacher can address but that AI models may fail to detect or may misunderstand. They worry about whether recommendations suggested by an algorithm would be fair. Educators’ concerns are manifold.

Everyone in education has a responsibility to harness the good to serve educational priorities while also protecting against the dangers that may arise as AI is integrated into educational technology.

Artificial Intelligence and the Future of Teaching and Learning

The U.S. Department of Education Office of Educational Technology’s new policy report, Artificial Intelligence and the Future of Teaching and Learning: Insights and Recommendations, addresses the clear need for sharing knowledge, engaging educators, and refining technology plans and policies for artificial intelligence (AI) use in education. The report describes AI as a rapidly advancing set of technologies for recognizing patterns in data and automating actions, and it guides educators in understanding what these emerging technologies can do to advance educational goals while evaluating and limiting key risks.

Key Insights

- AI enables new forms of interaction. Students and teachers can speak, gesture, sketch, and use other natural human modes of communication to interact with a computational resource and with each other. AI can also generate human-like responses. These new forms of interaction may provide support to students with disabilities.

- AI can help educators address variability in student learning. With AI, designers can anticipate and address the long tail of variations in how students can successfully learn—whereas traditional curricular resources were designed to teach to the middle or most common learning pathways. For example, AI-enabled educational technology may be deployed to adapt to each student’s English language abilities with greater support for the range of skills and needs among English learners.

- AI supports powerful forms of adaptivity. Conventional technologies adapt based upon the correctness of student answers. AI enables adapting to a student’s learning process as it unfolds step-by-step, not simply providing feedback on right or wrong answers. Specific adaptations may enable students to continue strong progress in a curriculum by working with their strengths and working around obstacles.

- AI can enhance feedback loops. AI can increase the quality and quantity of feedback provided to students and teachers, and it can suggest resources to advance their teaching and learning.

- AI can support educators. Educators can be involved in designing AI-enabled tools to make their jobs better and to enable them to better engage and support their students.

Recommendations: Call to Action for Education Leaders

Recommendation #1: Emphasize Humans in the Loop

We reject the notion of AI as a replacement for teachers. Teachers and other people must be “in the loop” whenever AI is applied to notice patterns and automate educational processes. We call upon all constituents to adopt Humans-in-the-Loop as a key criterion.

Recommendation #2: Align AI Models to a Shared Vision for Education

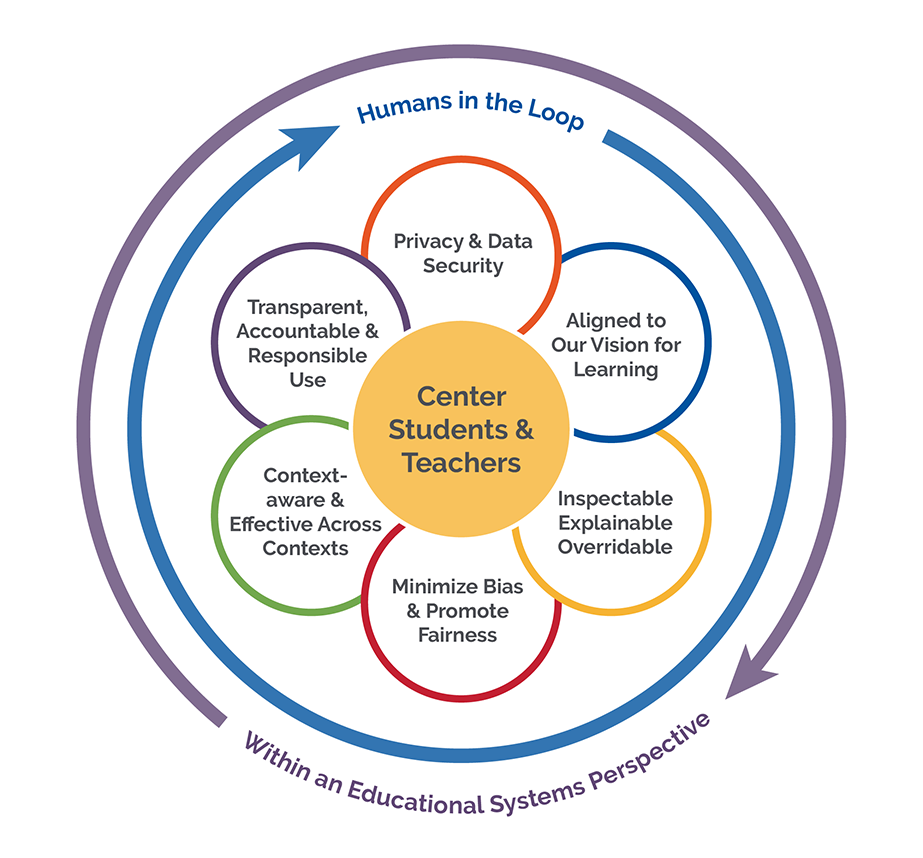

We call upon educational decision makers, researchers, and evaluators to judge the quality of an educational technology not only by its outcomes but also by the degree to which the models at the heart of the AI tools and systems align with a shared vision for teaching and learning. The accompanying figure describes the important qualities of AI models for education leaders to consider.

Recommendation #3: Design Using Modern Learning Principles

Achieving effective systems requires more than processing “big data”; it requires more than data science. Applications of AI must be based on established, modern learning principles and the wisdom of educational practitioners, and they should leverage the expertise of the educational assessment community in detecting bias and improving fairness. Going forward, we must also seek to create AI systems that are culturally responsive and culturally sustaining, leveraging the growing body of published techniques for doing so. Further, most early AI systems had few specific supports for students with disabilities and English learners, and we must ensure that AI-enabled learning resources are intentionally inclusive of these students.

Recommendation #4: Prioritize Strengthening Trust

Technology can only help us to achieve educational objectives when we trust it. Yet, we learned through a series of public listening sessions that distrust of educational technology and AI is commonplace. Because trust develops as people meet and relate to each other, we call for a focus on building trust and establishing criteria for trustworthiness of emerging educational technologies within the associations, convenings, and professional organizations that bring educators, innovators, researchers, and policymakers together.

Recommendation #5: Inform and Involve Educators

We call on educational leaders to prioritize informing and involving educational constituents so they are prepared to investigate how and when AI fits specific teaching and learning needs, and what risks may arise. Now is the time to show the respect and value we hold for educators by informing and involving them in every step of the process of designing, developing, testing, improving, adopting, and managing AI-enabled educational technology. This includes involving educators in reviewing existing AI-enabled systems, tools, and data use in schools; designing new applications of AI based on teacher input; carrying out pilot evaluations of proposed new instructional tools; collaborating with developers to increase the trustworthiness of the deployed system; and raising issues about risks and unexpected consequences as the system is implemented.

Recommendation #6: Focus R&D on Addressing Context and Enhancing Trust and Safety

Research that focuses on how AI-enabled systems can adapt to context (diversity among learners, variability in instructional approaches, differences in educational settings) is essential to answering the question “Do specific applications of AI work in education, and if so, for whom and under what conditions?” We call upon researchers and their funders to prioritize investigations of how AI can address the long tail of learning variability and to seek advances in how AI can incorporate contextual considerations when detecting patterns and recommending options to students and teachers. Further, researchers should devote greater attention to how to enhance trust and safety in AI-enabled systems for education.

Recommendation #7: Develop Education-Specific Guidelines and Guardrails

Data privacy regulation already covers educational technology; further, data security is already a priority of school educational technology leaders. Modifications and enhancements to the status quo will be required to address the new capabilities alongside the risks of AI. We call for involvement of all perspectives in the ecosystem to define a set of guidelines (such as voluntary disclosures and technology procurement checklists) and guardrails (such as enhancements to existing regulations or additional requirements) so that we can achieve safe and effective AI for education.

Listening Sessions

The U.S. Department of Education’s Office of Educational Technology, with support from Digital Promise, held listening sessions about Artificial Intelligence (AI). We connected with all constituents involved in making decisions about technology in education, including but not limited to teachers, educational leaders, students, parents, technologists, researchers, and policymakers.

The goal of these listening sessions was to gather input and ideas and to engage in conversations that will help the Department shape a vision for AI policy that is inclusive of cutting-edge research and practices while also being informed by the opportunities and risks.

Blog Posts

Defining Artificial Intelligence

In this first of a series of six blog posts, we define AI in three ways, shifting from a view of AI as human-like toward a view of AI that keeps humans in the decision cycle.

New Interactions, New Choices

The first blog post discussed how artificial intelligence (AI) will lead to educational technology products with more independent agency.

Product Roadmaps and the Path to Safe Artificial Intelligence

Use of artificial intelligence systems in school technology is presently light, allowing time for policy to have an impact on safety, equity, and effectiveness.

Engaging Educators

Artificial intelligence (AI) systems for learning environments have traditionally been designed to help students; however, new AI systems are being designed to assist or…

Need More Info?

- Contact ed.tech@ed.gov with questions.

- Please include “OET AI” in the subject line.

- For a print-out and summary of the Artificial Intelligence Initiative, please review our AI One-pager